Conversational debugging

Forget dashboards. Ask questions in plain language and get answers with root causes, visualizations, and fixes. No query language required.

Ask anything

Error investigation

Ask plain-language questions to surface and filter specific errors across services, without manually digging through logs.

Change correlation

Connect recent deployments or config changes to performance shifts, making it easy to spot what triggered an incident.

Root cause analysis

Trace failures back to their origin by following the chain of dependencies upstream from where the error surfaces.

Impact assessment

Instantly understand the blast radius of a bug: how many users, requests, and regions are affected right now.

Trend analysis

Visualize how metrics like error rates or memory usage behave over time to catch patterns before they become incidents.

System diagnostics

Visualize how metrics like error rates or memory usage behave over time to catch patterns before they become incidents.

28% are INVALID_POSTAL_CODE in PaymentForm.tsx:89.

5% are NETWORK_ERROR timeouts to api.stripe.com in ap-southeast-1.

Deploy: order-service v1.8.3 added eager loading on /orders; query count went from 3 to 47.

Feature flag: new-inventory-check enabled for 100% of traffic, adding an external API call per checkout.

webhook-worker failing to POST → outbound-proxy returning 503 → nat-gateway hit 65,535 port limit: connection leak in email-service.

3 regions affected: us-east-1 (89%), eu-west-1 (8%), ap-northeast-1 (3%).

~$14,200 in blocked checkout attempts. Enterprise accounts using SSO bypass are unaffected.

Current: 1.8 GB average, growing ~50 MB/hour until OOM restart every ~6 hours.

Leak likely introduced in v2.3.0 (deployed March 8), which correlates with the new caching layer in SearchService.

Seeing is believing

Ask your stack what's broken, slow, or acting weird, in plain language. The system correlates logs, metrics, and traces to answer back with causes.

Accelerate your insight

Sazabi doesn’t dump a full dashboard on you. Every response renders exactly the components your question requires — nothing more, nothing less.

The right chart, always

The agent picks the right visualization after investigating. A question about latency gets a chart. A question about impacted users gets a table.

Dynamic and interactive

Any chart, table, or diagram Sazabi generates can be shared instantly with your team. No exporting, no screenshots, just send the conversation.

Complete context

Beyond the conversation. Sazabi builds a deeper understanding of your system with every interaction.

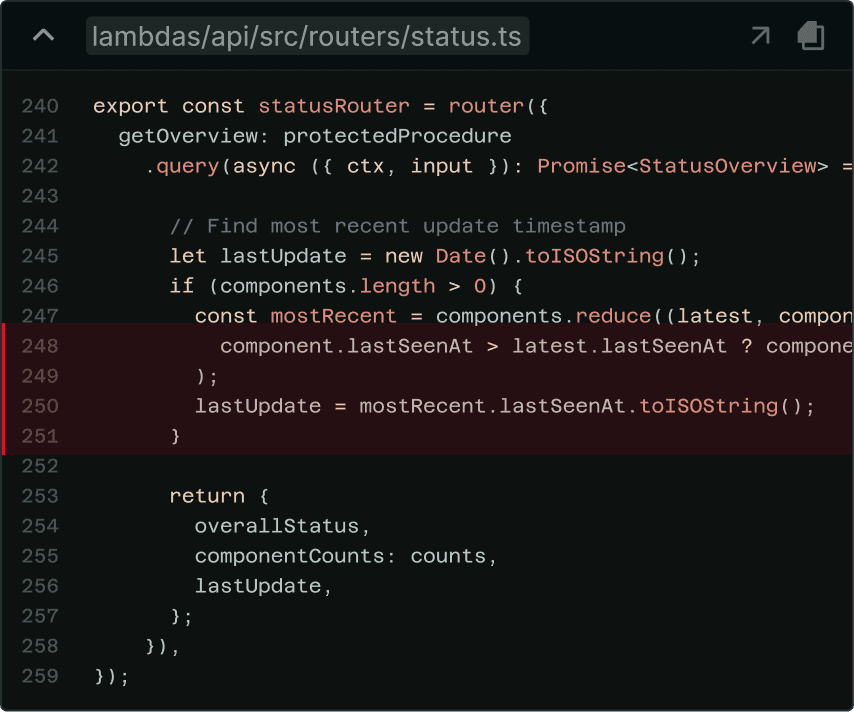

Code Search

Sazabi understands your repositories, services, and architecture, not just your logs. Ask questions that reference your actual code and get answers that make sense in context.

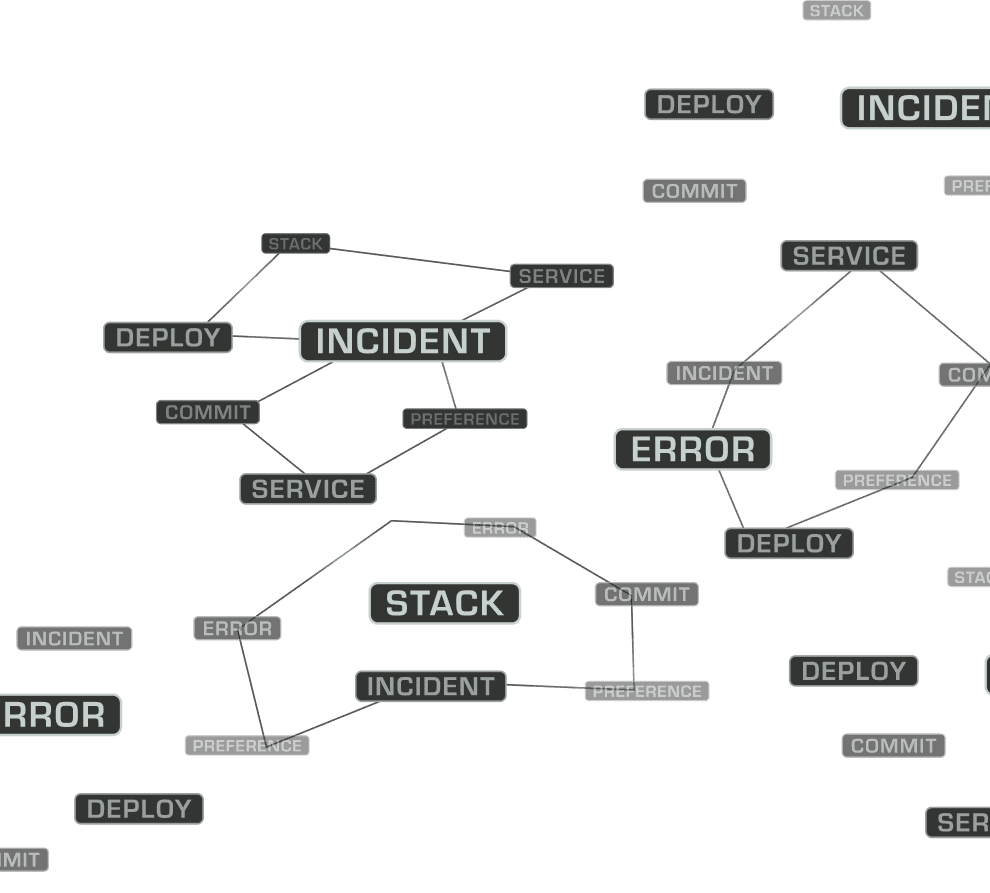

Perfect Memory

Sazabi remembers past incidents, deployments, errors, and your team’s preferences over time. The more you use it, the more it understands your system.

Integrations

Sazabi connects to the tools your team already uses, from code hosting to communication platforms. No new workflows, just deeper answers from the context you already have.