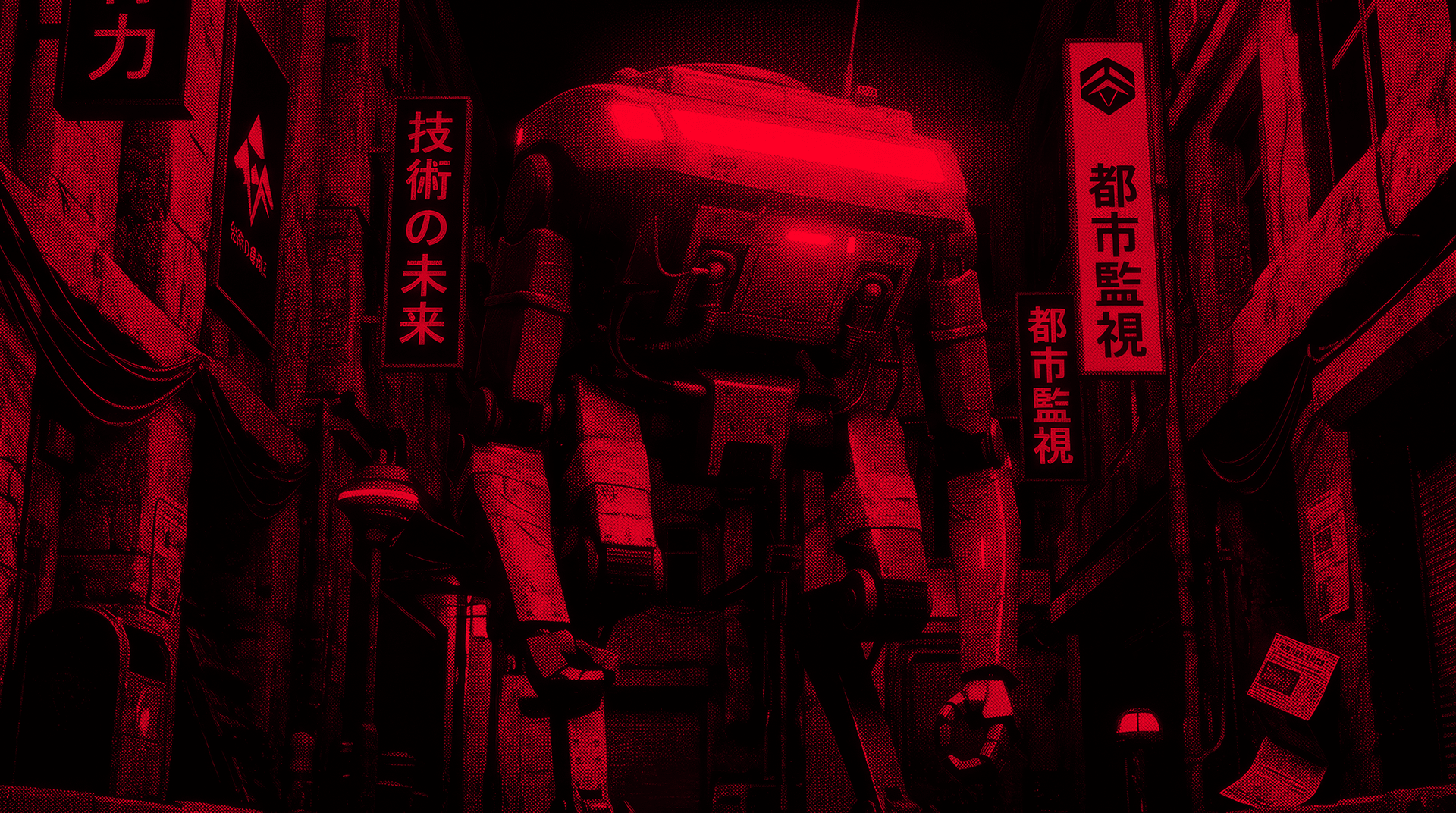

The Sazabi Manifesto

AI has changed what's possible in software systems. Observability hasn't caught up.

In 2026, production systems evolve continuously, behave probabilistically, and change faster than humans can track. Yet the tools we use to understand them are still rooted in assumptions from a bygone era.

Something has to change. This manifesto lays out a path forward.

1. Less Is More

Modern observability is bloated.

Over the past decade, observability platforms have accumulated hundreds of features, modules, dashboards, and configuration options. While these elements look impressive in demos, they rarely lead to more reliable systems. More often, they do the opposite by creating a steep learning curve and increasing cognitive load.

The cost is felt everywhere. Junior engineers struggle to make sense of sprawling UIs. Non-technical teammates are effectively locked out, unable to answer basic questions about production. Incident responders are flooded with information during high-stress situations.

Observability vendors promised clarity. What we got was complexity.

The DevOps movement gained traction around a simple idea: the people who build software should also operate it. But observability tools aren't built for product engineers. They are designed for platform specialists with deep expertise in infrastructure, databases, and distributed systems. The people asked to run production are handed tools built for someone else.

Product engineers don't want more observability bells and whistles. They want answers:

- Why is production down?

- Who is affected?

- What changed?

- How do we fix it right now?

And if observability is fundamentally about answering questions, it follows that the best UI for observability is chat.

We don't make observability better by adding more features. We make it better by cutting back.

Less is more.

2. Logs Are All You Need

For years, observability has been defined by the "Three Pillars": logs, metrics, and traces. The belief is that all three are required to understand production systems.

This idea is outdated.

Metrics and traces are powerful in theory but brittle in practice. Instrumentation is complex and error-prone. Aggregations are confusing and often hide real problems. Flame graphs look sophisticated but are unintuitive for most people.

Logs are different.

Everyone knows how to write a log line. Everyone knows how to read stdout. Logs match how humans naturally reason about systems: what happened, in what order, and with what context.

On a fundamental level, logs, metrics, and traces share the same underlying DNA: events. Metrics are aggregated events. Traces are collections of start and end events. Logs are events masquerading under a different name. The implications of this are profound: with logs, you can reconstruct metrics and traces, giving you three "pillars" for the price of one.
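To make the "events" claim concrete, here is a minimal sketch of deriving a metric and a set of trace-like spans from nothing but log events. Everything in it is an assumption for illustration: the field names (`ts`, `request_id`, `event`, `route`, `status`), the sample data, and the helper names `error_rate_by_route` and `spans_from_logs` are hypothetical and do not describe Sazabi's implementation. It also assumes each log line already carries a timestamp and a request identifier; without those, the reconstruction would need more work.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical log events. The field names are illustrative assumptions.
LOGS = [
    {"ts": 0.00, "request_id": "a1", "event": "request_start", "route": "/checkout"},
    {"ts": 0.12, "request_id": "a1", "event": "request_end", "route": "/checkout", "status": 200},
    {"ts": 0.20, "request_id": "b2", "event": "request_start", "route": "/checkout"},
    {"ts": 0.95, "request_id": "b2", "event": "request_end", "route": "/checkout", "status": 500},
    {"ts": 1.10, "request_id": "c3", "event": "request_start", "route": "/login"},
    {"ts": 1.15, "request_id": "c3", "event": "request_end", "route": "/login", "status": 200},
]


def error_rate_by_route(logs):
    """Metric: aggregate 'request_end' events into a 5xx error rate per route."""
    total, errors = Counter(), Counter()
    for e in logs:
        if e["event"] == "request_end":
            total[e["route"]] += 1
            if e["status"] >= 500:
                errors[e["route"]] += 1
    return {route: errors[route] / total[route] for route in total}


@dataclass
class Span:
    request_id: str
    route: str
    start: float
    end: float

    @property
    def duration(self):
        return self.end - self.start


def spans_from_logs(logs):
    """Trace: pair each start event with the end event sharing its request_id."""
    starts, spans = {}, []
    for e in sorted(logs, key=lambda e: e["ts"]):
        if e["event"] == "request_start":
            starts[e["request_id"]] = e
        elif e["event"] == "request_end" and e["request_id"] in starts:
            s = starts.pop(e["request_id"])
            spans.append(Span(e["request_id"], e["route"], s["ts"], e["ts"]))
    return spans


if __name__ == "__main__":
    print(error_rate_by_route(LOGS))
    slowest = max(spans_from_logs(LOGS), key=lambda s: s.duration)
    print(slowest.request_id, round(slowest.duration, 2))
```

The same six lines answer both "how often does checkout fail?" and "which request was slowest?", which is the sense in which one stream of events can stand in for all three pillars.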

Historically, logs were dismissed because they were unstructured. Today, that weakness is a strength. AI excels at processing large volumes of unstructured text. It can surface patterns, identify anomalies, and extract meaning from natural language at scale. Metrics and traces could never hope to be as rich.

Logs have challenges, too. Cost and availability are chief among them. But these aren't insurmountable. Cost can be addressed with summarization, compression, tiering, and intelligent retention. Availability can be improved through automatic instrumentation: AI that adds meaningful log lines directly to code.

The Bitter Lesson taught us that AI progress doesn't come from clever hacks. It comes from simple, scalable primitives. A similar rule applies to observability.

Logs are all you need.

3. Monitoring Is Dead

Before observability, there was monitoring. Monitoring is about detection: predefined thresholds on CPU, memory, or other signals. Observability is about understanding: the ability to explore systems and explain failures.
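As a rough illustration of what "predefined thresholds" means in practice, the sketch below models a static monitor. The `ThresholdMonitor` class, the signal names, and the threshold values are all hypothetical, chosen only to show the shape of the idea rather than any particular vendor's product.

```python
from dataclasses import dataclass


@dataclass
class ThresholdMonitor:
    """A static monitor: fires when one named signal exceeds a fixed threshold."""
    signal: str
    threshold: float

    def check(self, sample):
        value = sample.get(self.signal)
        return value is not None and value > self.threshold


# Hypothetical monitors, written in advance by someone guessing at failure modes.
MONITORS = [
    ThresholdMonitor("cpu_percent", 90.0),
    ThresholdMonitor("memory_percent", 85.0),
]


def firing(sample):
    """Return the signals whose monitors fire for this sample."""
    return [m.signal for m in MONITORS if m.check(sample)]


if __name__ == "__main__":
    # A failure someone anticipated: the CPU monitor fires.
    print(firing({"cpu_percent": 95.0, "memory_percent": 40.0}))
    # A failure nobody anticipated: half of checkouts fail, nothing fires.
    print(firing({"cpu_percent": 30.0, "memory_percent": 40.0, "checkout_error_rate": 0.5}))
```

The second sample is the point: a static monitor can only detect the failures someone thought to describe ahead of time.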

Today, software teams think of monitoring as a necessary evil: a painful but essential part of running production systems. But this consensus doesn't change the truth: monitoring is fundamentally broken.

Ask any engineer, and you'll hear the same complaints:

- Monitors are tedious to create and maintain.

- They require constant tuning.

- They misfire.

- They page the wrong people at the wrong time.

- Worst of all, they train teams to ignore alerts that sometimes represent real customer pain.

These tradeoffs might be acceptable if monitors reliably caught issues. They don't.

Monitoring fails because it's reactive. Teams create monitors after incidents, not before. Meanwhile, the systems they manage are complex, constantly changing, and rarely fail the same way twice.

We're playing a losing game. We don't need better monitors. We need a new paradigm.

The future is Autonomous Alerts: AI that continuously watches your app in production, investigates issues, and escalates only when absolutely necessary.

No more static monitors. No more false positives. No more endless toil.

Just comprehensive coverage out of the box and smart, actionable alerts.

Monitoring is finally dead.

Introducing: Sazabi

Sazabi is an AI-native observability platform built on these principles.

But it's also more than a product. It's a new philosophy for software reliability in the AI era.

Less noise. Less overhead. Less complexity.

More clarity. More confidence. More speed.

If this way of thinking resonates with you, come build with us.